1. Subjects

Ciliated sinonasal epithelium was harvested during surgery from patients who had not received antibiotics during a period of three months and had an asthma or allergic reaction. The harvested sinonasal epithelium was stabilized by incubation in transfer medium (DMEM/F12: Dulbecco's Modified Eagle's Medium/Ham's F-12, Gibco BRL, Grand Island, NY, USA) supplemented with 1% penicillin-streptomycin solution (10,000 units/mL penicillin and 10,000 µg/mL streptomycin). The harvested epithelium was washed with transfer medium to remove substances such as blood and was then placed in 0.1% protease (Sigma, St. Louis, MO, USA) in transfer medium. It was kept in a 5% CO2 incubator at 37°C for 1 hour.

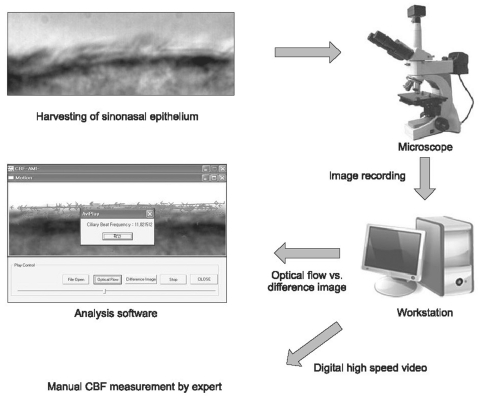

We obtained 50 recorded images of ciliated sinonasal epithelium from the Department of Otolaryngology. The images were recorded at 50-100 frames per second using a charge-coupled device camera (Moticam 2000, Motic Inc., Hong Kong, China) attached to an inverted microscope (Axiovert 200 MAT, Carl Zeiss, Hamburg, Germany) at a magnification of ×1,000, and were stored on a workstation.

The application was developed using Microsoft Visual C++ 2005 (Microsoft, Redmond, WA, USA). It automatically measures CBF from a stored image and allows comparison of the optical flow and difference image techniques.

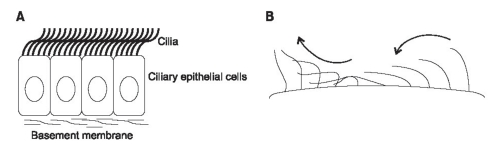

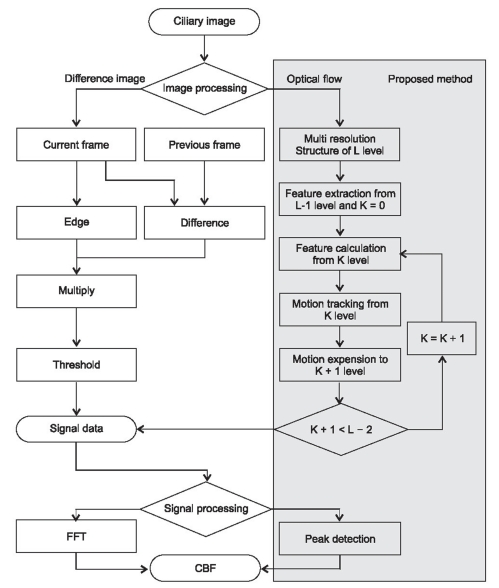

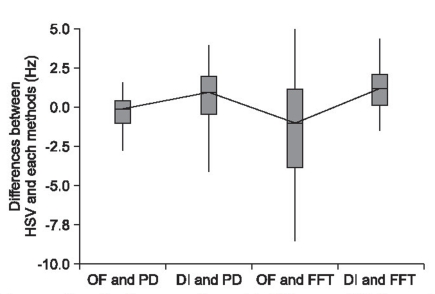

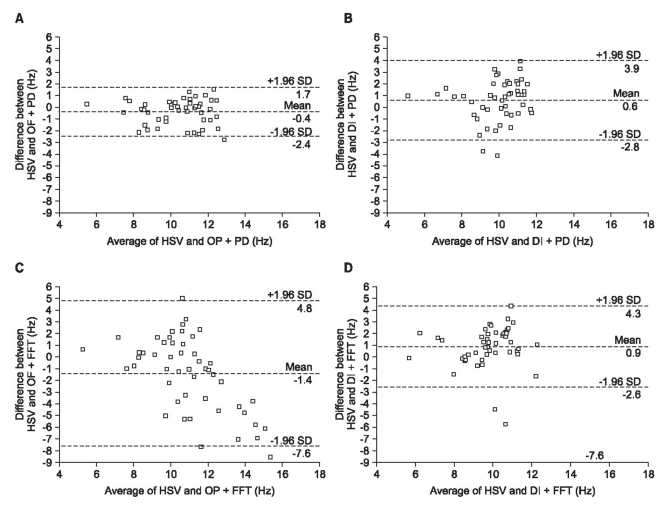

This application involves two stages: extraction of features and analysis of the extracted features. In the feature extraction stage, we adopted two preprocessing methods, the difference image method and the optical flow method (Figure 3). For analysis of the features extracted in the previous step, we adopted two methods: FFT and simple peak detection (Figure 4).

2. Image Processing

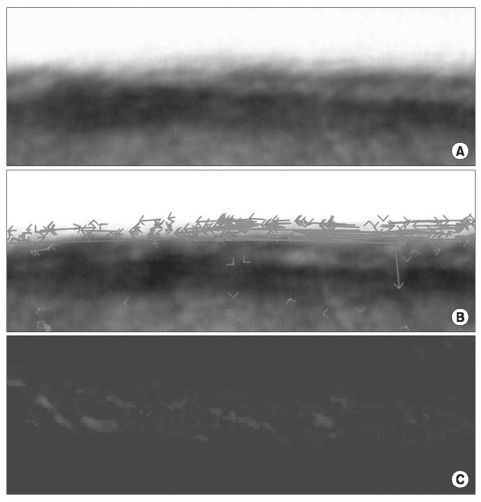

This study applied difference image and optical flow for extraction of features of ciliary motion (

Figure 5). Ciliary motion shows periodic change of pixel brightness in images. This is related to ciliary beating frequency. Frame

K is subtracted from frame

K-1 at

m ├Ś

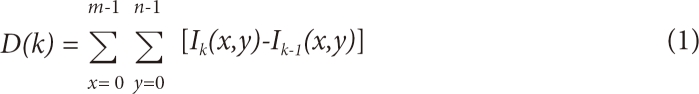

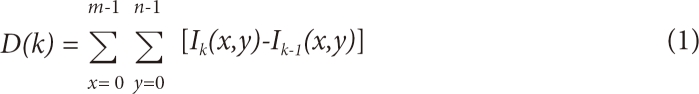

n pixels, and a regular signal by periods is obtained. Difference image method calculated difference of pixel in the previous frame versus pixel in the current frame for all pixels of each block, which were then added for calculation of difference of all pixels. Equation (1) extracts signal from image.

where I(x, y) is the grey level of the pixel at (x, y) in frame K; D(K) is the sum of the grey-level differences for frame K; and m and n are the image dimensions in pixels.
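As an illustration of the difference image signal, the following minimal sketch sums pixel-wise grey-level differences between consecutive frames. It assumes that frames are given as nested lists of grey levels and that the absolute value of each pixel difference is taken before summing; the function name `difference_signal` and the toy frames are hypothetical and are not taken from the original application.

```python
def difference_signal(frames):
    """Return one value per consecutive frame pair.

    Each value is the sum over all pixels of |current - previous|
    (the absolute value is an assumption of this sketch).
    """
    signal = []
    for prev, curr in zip(frames, frames[1:]):
        total = 0
        for row_prev, row_curr in zip(prev, curr):
            for p, c in zip(row_prev, row_curr):
                total += abs(c - p)
        signal.append(total)
    return signal


# Toy 2 x 2 sequence: one pixel brightens and then returns to baseline.
frames = [
    [[10, 10], [10, 10]],
    [[10, 30], [10, 10]],
    [[10, 10], [10, 10]],
]
print(difference_signal(frames))  # [20, 20]
```

For a real recording, the resulting list is the one-dimensional signal whose periodicity is analyzed in the signal processing stage.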

Optical flow is one of the most widely studied approaches to motion recovery. The term was introduced by Horn and Schunck [11]. They defined optical flow as the motion of brightness patterns on the imaging surface of 2-D images that corresponds to the real motion of an object in 3-D space [11]. The optical flow field estimates the spatio-temporal pattern of image or signal intensity using an optical flow algorithm. Let I(x, y, t) be the image brightness at location (x, y) and time t, which changes over time to produce an image sequence. Two main assumptions can be made. First, the brightness of every point of a moving or static object does not change over time. Under this assumption, if the object is moving, the moved point can be found by examining the brightness around the object. Second, the brightness I(x, y, t) depends smoothly on the coordinates (x, y) over the greater part of the image, which is called smoothness of velocity. Under these two assumptions, motion is modeled through the continuous change of brightness as a function of time and location. In addition, if the K × K points around the object move together with it, a least-squares fit is used to search for the moved point (K is usually 5 or 10 pixels).
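The least-squares search over a K × K window can be sketched as follows. This is a minimal single-window Lucas-Kanade estimate, assuming central differences for the spatial gradients and a simple frame difference for the temporal gradient; `lucas_kanade_window`, `make_frame`, and the synthetic quadratic brightness pattern are hypothetical and serve only to show the calculation.

```python
def lucas_kanade_window(frame1, frame2, cx, cy, half=2):
    """Estimate the displacement (u, v) of the (2*half+1)^2 window at (cx, cy).

    Solves the 2 x 2 normal equations of Ix*u + Iy*v + It = 0 in the
    least-squares sense over the window.
    """
    sxx = sxy = syy = sxt = syt = 0.0
    for y in range(cy - half, cy + half + 1):
        for x in range(cx - half, cx + half + 1):
            ix = (frame1[y][x + 1] - frame1[y][x - 1]) / 2.0  # spatial gradient in x
            iy = (frame1[y + 1][x] - frame1[y - 1][x]) / 2.0  # spatial gradient in y
            it = frame2[y][x] - frame1[y][x]                  # temporal gradient
            sxx += ix * ix
            sxy += ix * iy
            syy += iy * iy
            sxt += ix * it
            syt += iy * it
    det = sxx * syy - sxy * sxy
    if abs(det) < 1e-12:
        raise ValueError("window has too little texture to solve for the flow")
    u = (-syy * sxt + sxy * syt) / det
    v = (sxy * sxt - sxx * syt) / det
    return u, v


def make_frame(shift_x, shift_y, size=21, centre=10):
    """Synthetic quadratic brightness pattern shifted by (shift_x, shift_y)."""
    return [[(x - centre - shift_x) ** 2 + (y - centre - shift_y) ** 2
             for x in range(size)] for y in range(size)]


frame1 = make_frame(0.0, 0.0)
frame2 = make_frame(0.3, -0.2)
u, v = lucas_kanade_window(frame1, frame2, cx=10, cy=10)
print(round(u, 3), round(v, 3))  # 0.3 -0.2
```

The default of `half=2` corresponds to K = 5. The determinant check guards against windows with almost no brightness variation, where the two unknowns cannot be separated.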

Local accuracy and robustness are the most important components of any feature tracker. Robustness relates to the sensitivity of tracking to changes in lighting, the size of the image motion, and so on. Accuracy relates to the local sub-pixel accuracy of tracking. To handle large motions, it is preferable to select a large integration window, whereas a small window better preserves local accuracy. Choosing the integration window size therefore involves a trade-off between local accuracy and robustness. We adopted a pyramidal implementation of the Lucas-Kanade algorithm to address this problem [12]. The pyramid representation is built in a recursive fashion.

Let L = 1, 2, ... be a generic pyramidal level, and let Lm be the height of the pyramid. The pyramidal implementation of the Lucas-Kanade algorithm proceeds as follows.

1) Compute the optical flow at the deepest pyramid level Lm.

2) Propagate the result of the computation to the upper level Lm-1 in the form of an initial estimate for the pixel displacement at level Lm-1.

3) Compute the refined optical flow at level Lm-1.

4) Propagate the result of the computation to the level Lm-2.

5) And so on up to the level 0 (the original image).

This algorithm shows substantially better performance than traditional gradient methods, since it allows for tracking of large feature movements through the image sequence.
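The coarse-to-fine steps listed above can be sketched as a short control loop. The sketch below assumes that each pyramid level is built by averaging 2 × 2 blocks (a simple stand-in for low-pass filtering and subsampling) and that the per-level flow computation is supplied by the caller. The names `downsample`, `build_pyramid`, `coarse_to_fine`, and `solve_level` are hypothetical; only the propagation rule (the estimate is doubled when moving to the next finer level) is illustrated here.

```python
def downsample(image):
    """Halve the resolution by averaging 2 x 2 blocks."""
    h, w = len(image) // 2, len(image[0]) // 2
    return [[(image[2 * y][2 * x] + image[2 * y][2 * x + 1]
              + image[2 * y + 1][2 * x] + image[2 * y + 1][2 * x + 1]) / 4.0
             for x in range(w)] for y in range(h)]


def build_pyramid(image, height):
    """Return [level 0 (original image), level 1, ..., level `height`]."""
    pyramid = [image]
    for _ in range(height):
        pyramid.append(downsample(pyramid[-1]))
    return pyramid


def coarse_to_fine(pyr1, pyr2, solve_level):
    """Solve at the deepest level, then refine level by level down to level 0.

    `solve_level(img1, img2, guess)` is a caller-supplied function that
    returns the residual displacement (dx, dy) at one level, given the
    initial guess propagated from the coarser level.
    """
    guess = (0.0, 0.0)
    for level in range(len(pyr1) - 1, -1, -1):
        dx, dy = solve_level(pyr1[level], pyr2[level], guess)
        flow = (guess[0] + dx, guess[1] + dy)
        if level > 0:
            # Displacements double when moving to the next finer level.
            guess = (2.0 * flow[0], 2.0 * flow[1])
    return flow


image = [[float(x + y) for x in range(16)] for y in range(16)]
pyramid = build_pyramid(image, 2)
print([len(level) for level in pyramid])  # [16, 8, 4]
```

Because the residual at each level only needs to cover what the coarser levels missed, a small integration window can be used at every level while still tracking large motions in the original image.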

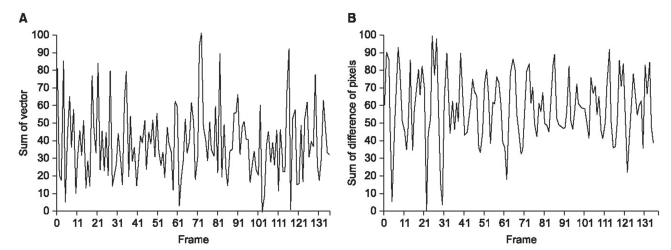

3. Signal Processing

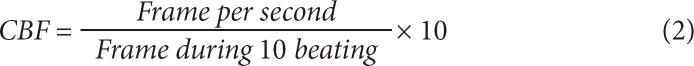

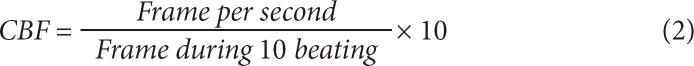

This study used peak detection and FFT techniques to calculate CBF from the signals produced by the optical flow and difference image methods. FFT and peak detection are among the most widely used algorithms for computing the frequency of a signal. The FFT is a much faster algorithm for computing the discrete Fourier transform (DFT), because it exploits symmetry to reduce the number of operations. The FFT is used in a wide variety of applications, from signal processing and the solution of partial differential equations to algorithms for quick multiplication of large integers. A high-pass filter was applied, and the signal extracted from the image was analyzed by FFT using AutoSignal 1.7 (SeaSolve, San Jose, CA, USA). In the resulting FFT power spectrum, the frequency with the highest amplitude was used as the CBF. The calculation of CBF was (analyzed frame number × frequency × 2) / recorded image time.
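To make the FFT step concrete, the sketch below finds the frequency with the highest power in a sampled signal. It is not a reproduction of the AutoSignal analysis: it assumes a signal length that is a power of two, removes the mean as a crude substitute for the high-pass filter, and reports the peak bin directly in Hz. The names `fft` and `dominant_frequency` and the synthetic 10 Hz test signal are hypothetical.

```python
import cmath
import math


def fft(values):
    """Recursive radix-2 Cooley-Tukey FFT; len(values) must be a power of two."""
    n = len(values)
    if n == 1:
        return [complex(values[0])]
    even = fft(values[0::2])
    odd = fft(values[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


def dominant_frequency(signal, frame_rate):
    """Return the frequency (Hz) of the strongest non-DC bin of the power spectrum."""
    n = len(signal)
    if n < 4 or n & (n - 1):
        raise ValueError("signal length must be a power of two (at least 4)")
    mean = sum(signal) / n
    spectrum = fft([s - mean for s in signal])  # mean removal suppresses the DC term
    power = [abs(c) ** 2 for c in spectrum[1:n // 2]]
    peak_bin = 1 + power.index(max(power))
    return peak_bin * frame_rate / n


frame_rate = 100.0  # frames per second
signal = [5.0 + math.sin(2 * math.pi * 10.0 * k / frame_rate) for k in range(128)]
print(dominant_frequency(signal, frame_rate))  # 10.15625, the bin nearest 10 Hz
```

The frequency resolution is the frame rate divided by the number of frames (here 100 / 128 Hz), so longer recordings give a finer estimate.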

Any continuous periodic waveform carries a variety of useful information, including the beginning and end points of a cycle, the minimum, maximum, or mean signal values within the cycle, and the repetition rate of the cycle. In many cases, this information can be obtained from a quick glance at the waveform and a simple calculation. In this paper, we found that one peak corresponds to one ciliary beating cycle. Therefore, the peak detection method is simpler than the FFT. The peak detection method finds the peaks of the signal that exceed the average value by a weight calculated as a percentage of the average.
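A minimal sketch of such a peak counter is shown below. It assumes that the threshold is the signal mean increased by a fixed percentage, that a peak is a local maximum above that threshold, and that the beat frequency is the number of peaks divided by the recording duration. The 5% weight, the function names, and the synthetic 8 Hz signal are hypothetical choices for illustration.

```python
import math


def count_peaks(signal, weight_percent=5.0):
    """Count local maxima that exceed mean * (1 + weight_percent / 100)."""
    mean = sum(signal) / len(signal)
    threshold = mean * (1.0 + weight_percent / 100.0)
    peaks = 0
    for i in range(1, len(signal) - 1):
        # Strict rise followed by a non-rise, so a flat-topped peak counts once.
        is_local_max = signal[i] > signal[i - 1] and signal[i] >= signal[i + 1]
        if is_local_max and signal[i] > threshold:
            peaks += 1
    return peaks


def cbf_from_peaks(signal, frame_rate, weight_percent=5.0):
    """Beat frequency in Hz: one counted peak is taken as one beating cycle."""
    duration = len(signal) / frame_rate
    return count_peaks(signal, weight_percent) / duration


frame_rate = 100.0  # frames per second
signal = [10.0 + math.sin(2 * math.pi * 8.0 * k / frame_rate) for k in range(200)]
print(cbf_from_peaks(signal, frame_rate))  # 8.0
```

On noisy recordings a plain local-maximum test can count spurious peaks, which is why the threshold above the average matters; smoothing the signal first would be a further, optional safeguard.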

4. Digital High Speed Video

The gold standard used in this study was the digital high-speed video method, in which CBF is measured manually by an expert observing the video in slow playback. The observer recruited for this study had at least 2 years of experience in the field. Videos were presented to the observer in random order with no information given regarding the CBF. Ciliary beating was observed frame by frame: the observer pressed a button in the application to advance one frame and measured ciliary beating from the displayed image. The observer counted 10 cycles and noted the starting time and ending time of the image sequence. CBF was calculated using equation 2.
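Equation 2 is not reproduced in this section. A natural reading of the procedure is that CBF equals the number of counted cycles divided by the elapsed time, and the sketch below works under that assumption only; `manual_cbf` and the example times are hypothetical.

```python
def manual_cbf(start_time_s, end_time_s, cycles=10):
    """Beat frequency in Hz, assuming CBF = cycles / (end time - start time)."""
    elapsed = end_time_s - start_time_s
    if elapsed <= 0:
        raise ValueError("end time must be later than start time")
    return cycles / elapsed


# Ten cycles counted between 0.5 s and 1.75 s of the recording.
print(manual_cbf(0.5, 1.75))  # 8.0
```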