I. Introduction

Depression affects millions of people [

1], worsens overall health outcomes, and is a leading cause of disability worldwide [

2]. It is a significant public health concern for which extant interventions are limited in efficacy [

3,

4]. To improve treatments, recent research has focused on the development of biomarkers to better understand the nature of psychiatric disorders [

5]. However, the highly heterogeneous nature of depression has proven to be a consistent barrier for this research [

6,

7]. To address this issue, one common approach involves empirically analyzing large sets of data to identify clinically actionable depressive subtypes.

To this end, researchers regularly employ latent variable models to refine depression diagnoses and create homogenous subtypes [

8ŌĆō

10], which are beneficial because they can function as clear endpoints for biomarker identification. Ideally, biomarkers are associated with several endpoints such as severity, treatment response, or endophenotypes, implying a need for subtypes defined across multiple behavioral metrics [

11ŌĆō

13]. Here, we explore an unsupervised machine learning method for this task. Latent Dirichlet allocation (LDA) is a popular method for identifying abstract topics within text corpora [

14]. In this application, we instead view abstract topics as depressive subtypes and symptoms as text. LDA is a generative probabilistic model; under an LDA model, we determine whether or not a patient has a symptom by:

Generating a mixture of subtypes to represent the patient;

Creating a distribution of symptoms for each subtype;

Choosing a subtype based upon the mixture of subtypes; and

Choosing a symptom based upon the subtypeŌĆÖs distribution.

To generate additional symptoms, we repeat steps 3 and 4. This process is a less natural model for describing symptom data than a more typical latent variable model, but it is more flexible.

The objective of this study was to evaluate LDA as a method of identifying depressive subtypes. LDA models were created with symptom data from a cohort of depressed patients and analyzed to identify potential subtypes. Patient groups were constructed based upon the subtypes and assessed with respect to outcome data. These steps were repeated with latent class analysis (LCA), a widely used latent variable model, to provide a point of comparison [

15ŌĆō

17].

III. Results

1. Clinical Outcomes

Adjusted odds ratios (ORs) are presented in

Table 3, unadjusted odds ratios are presented in

Supplementary Table S4, and HoNOS data are presented in

Tables 4 and

5. Both the LCA and LDA models aligned well with their outcomes. For example, the LDA and LCA psychotic groups were the most likely to have cognition problems, the LDA and LCA severe groups were the most likely to have self-injury problems, and the LDA mild set and the LCA mild group were less likely to have emergency presentations or crisis events.

However, the differences in outcomes between the LDA groups were more variable than LCA groups. With few exceptions, the outcomes for the LCA groups were organized by severity. For example, the LCA mild group was the least likely to have crisis events (OR = 0.27; 95% confidence interval [CI], 0.23ŌĆō0.31; p < 0.001), the severe group was the most likely (OR = 5.26; 95% CI, 4.58ŌĆō6.05; p = 0.01), and the moderate group was in between the two (OR = 0.84; 95% CI, 0.74ŌĆō0.95; p < 0.001). However, the LDA severe group was not significantly more likely to have crisis events (OR = 1.14; 95% CI, 0.98ŌĆō1.33; p = 0.08), though patients in that group were more likely to have emergency presentations (OR = 1.16; 95% CI, 1.16ŌĆō1.29; p = 0.01), had a higher average number of symptoms, and were more likely to have self-injury problems.

The differences in outcomes tended to be smaller within the LDA groups than in the LCA groups. For example, although the LDA and LCA mild groups were the least likely to have problems with depressed mood, the range within the LDA groups was only 7.1% compared to 28.2% within the LCA groups (LDA, between 46.3% and 53.4%; LCA, between 43% and 71.2%). The LCA and LDA groups contained similar numbers of patients.

2. Model Comparisons

The two methods categorized mild and psychotic individuals in a similar way. Seventy-seven percent of individuals who were in the LDA mild set (the mild, agitated, and anergic-apathetic groups) were placed into the LCA mild group; 89% of individuals that were in the LCA psychotic group were placed into the LDA psychotic group. The LCA moderate patients were placed into the LDA groups, excluding the psychotic group, almost evenly: 29% were placed into the severe group, 21% in the mild group, 18% in the agitated group, and 24% in the anergic-apathetic group. However, the placement of LCA severe patients into the LDA groups was less intuitive. LCA severe patients were placed into both the LDA severe and agitated groups at relatively high proportions (29% and 33% of the time, respectively).

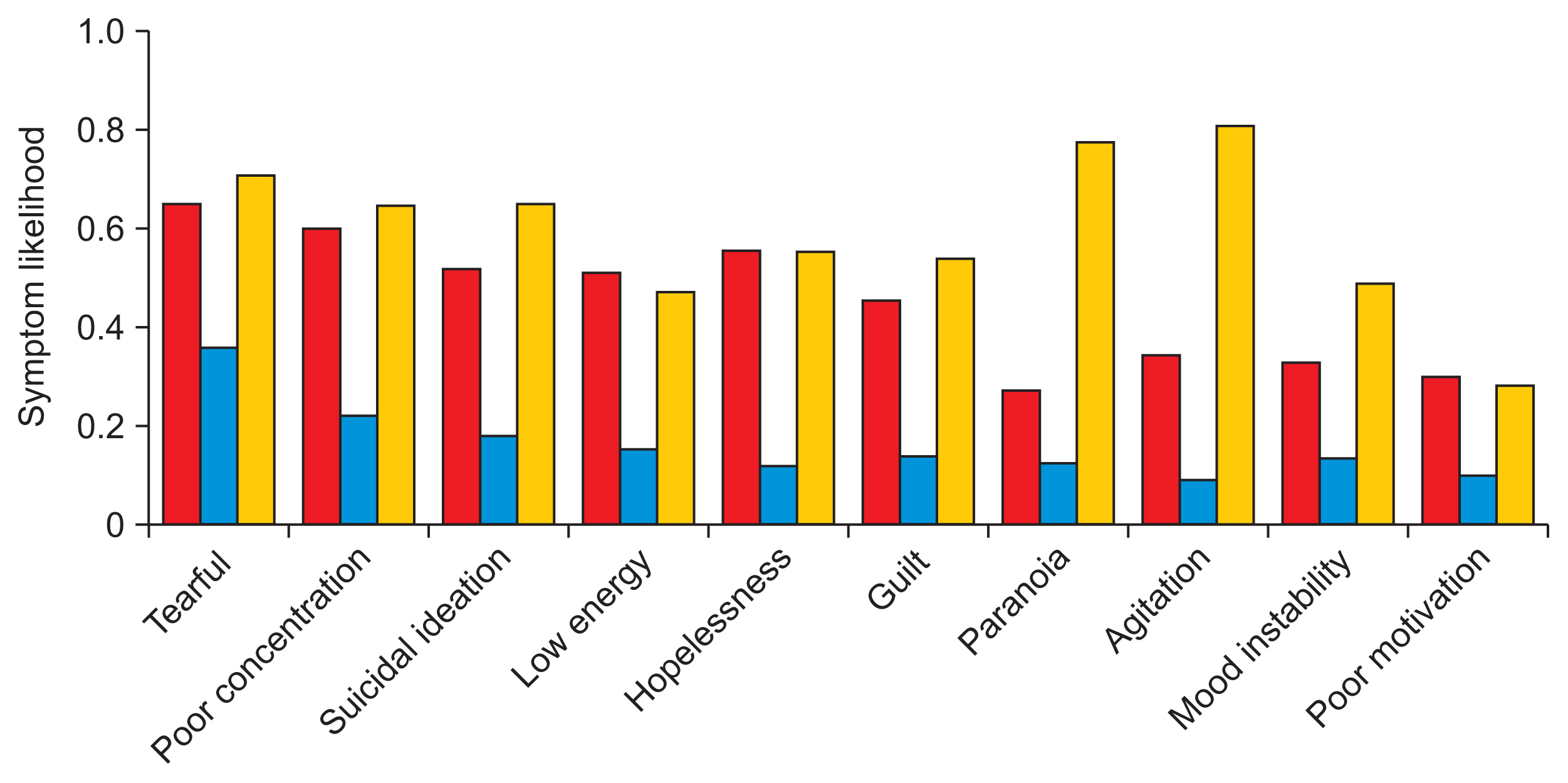

Because LDA produces a distribution of symptoms, it is not possible to make a direct comparison between the symptom likelihoods in the LCA and LDA subtypes. Instead, in

Figures 4 and

5, we present LDA symptom likelihoods as the likelihood that a patient would have that symptom if we were to generate the average number of symptoms for the group the patient is in. More information can be found under ŌĆ£Generating symptom likelihoods from LDA modelsŌĆØ in

Supplement B.

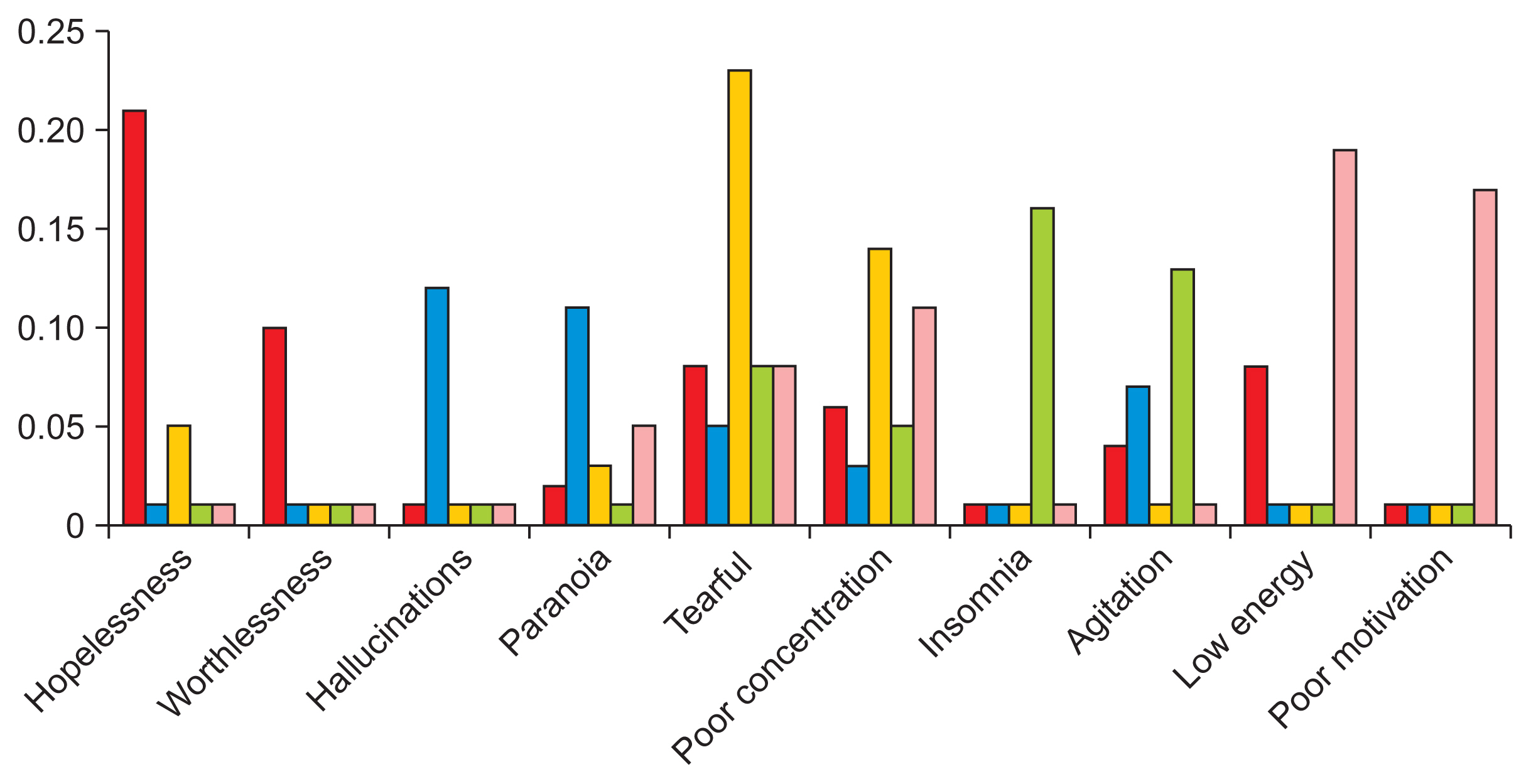

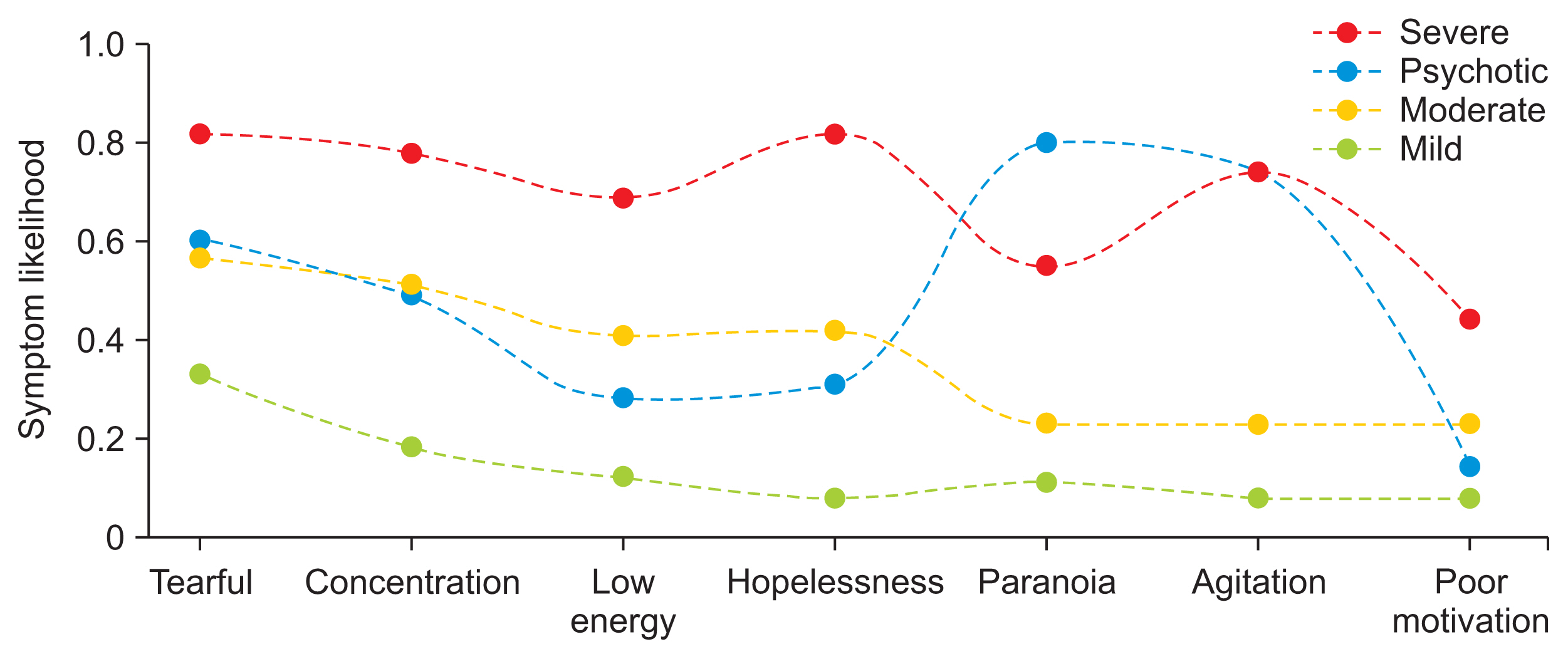

The LDA subtypes could be differentiated by two or three key symptomsŌĆöthat is, if a symptom was highly likely in one subtype, it was not likely to be present in other subtypes, with some exceptions. For example, as shown in

Figure 4, the LDA psychotic and agitated subtypes were both likely to be described as agitated. This contrasts with the LCA subtypes, which largely followed the same pattern as the outcome data, with a clear stratification by the overall likelihoods of symptoms.

IV. Discussion

In this study, LDA and LCA were used to identify two sets of depressive subtypes based upon patientsŌĆÖ symptomatology. For each method, several models were evaluated. The final models created subtypes that were coherent with respect to various outcomes. However, they differed significantly in their relationships to the data. The LDA subtypes were characterized by qualitative descriptions, whereas the LCA subtypes were clearly stratified by severity; the prevalence of different outcomes was ordered precisely from mild to severe, with a few exceptions related to the psychotic subtype.

Empirically, stratification by severity has been a common trend in similar work employing LCA [

8,

9]. Outside of severity, classes are most clearly characterized by one or two key symptoms. For example, Lamers et al. [

16] identified moderate, severe melancholic, and severe atypical sets by analyzing Diagnostic and Statistical Manual of Mental Disorders (DSM) criteria data. The latter two groups were primarily differentiated by weight and appetite changes. There were other statistically significant differences, but they did not distinguish patients to the same extent; instead, issues would be similarly probable, such as less sleep (0.515 vs. 0.388) or fatigue (0.964 vs. 1.000). One potential explanation for this would be the limited set of symptoms considered in the DSM criteria and depressive measures broadly. However, these issues persisted in this study among all LCA models despite the inclusion of a wider range of symptoms.

LDA departed from stratification by severity; the classes were naturally characterized by 2 or 3 unique symptoms according to the model. The differences in outcomes were less clear than those in the LDA model, but this may have been, in part, due to the even numbers of patients across groups. For example, patients in the LCA moderate subtype were spread across the LDA subtypes, potentially making group outcomes more difficult to distinguish. However, for every class, the LDA model was able to prioritize clusters of symptomsŌĆöthat is, the most important symptoms in each subtype were significantly overrepresented in the corresponding patient group. This is a departure from the results of LCA models. Only a few symptoms, mostly associated with psychosis, were unrelated to severity in the LCA model, whereas there was little overlap in the most important symptoms in the LDA classes.

Supplementary Table S3 presents more information on the LDA classes.

The observation that the LDA models characterized patients by qualitative characteristics and the LCA models classified patients by severity is in line with the assumptions made by each method. For example, the fact that the final LDA model produced qualitative descriptions is unsurprising, given that it is a topic model. In latent variable models, symptoms should be independent within classes. Yet, with current depression criteria, if a class is extremely likely to have two or three symptoms, then from a clinical perspective, it is to be expected that other symptoms are present [

24,

25]. Here, the LCA model likely reconciled these conditions by assigning high likelihoods for every symptom [

26]. There is a need to develop new methods for deriving data-driven depressive subtypes; the findings of the present study suggest that to do so, shifting assumptions could be effective.

There are several limitations to this study. First, the data source was a secondary mental health services provider, which may include more varied cases of depression. For example, patients (and symptoms) in the most severe subtypes, such as psychotic patients, may not be present at the primary care level, where depression is often first treated. In the most extreme example, a general practitioner might not record a single symptom related to mental health. Another consideration is whether mental health treatment is a priority for the patient or the provider. Although mood and anxiety disorders are commonly comorbid with other chronic conditions, mental health may not be discussed because the patient would prefer to focus on a separate treatment, such as a chemotherapy session. Thus, the analysis performed here would certainly yield different results in other outpatient or inpatient settings.

Second, the variables used in this study are not directly comparable to prior works. Psychiatry researchers prefer to use validated, structured depression measurement tools [

27], which collect data on specific symptoms and their severity tied to a specific timeframe (commonly 2 weeks). In comparison, our symptom data was based upon whether a clinician recorded a symptom; there were no guarantees about severity, timeframe, or symptom choice. Information on common symptoms, such as low mood, lack of interest, anergia, may not even have been available because a clinician chose not to write about it. Nonetheless, the trade-off allows for the discovery of new, novel subtypes because additional data, such as bereavement or mental health history, can always be incorporated if resources are dedicated to their extraction, whereas measurement tools are commonly limited to 20 or fewer symptoms.

The factors that contribute to replicability issues constitute another key limitation. These include the lack of analysis of a separate data set and the variability of latent variable studies. For example, the demographics of a population are important because patientsŌĆÖ ethnicity is known to affect their diagnosis, introducing bias to any data-driven analysis [

28]. Furthermore, for latent variable studies, the number of latent variables is subject to the analystŌĆÖs discretion. While theoretically motivated guidelines exist, there are always cases where

n and

n+1 classes are valid options [

15].

This study explored LDA as a method of identifying subtypes of depression within a large set of symptom data. Our results suggest that LDA is a promising method, particularly because it surfaces subtypes associated with multiple outcomes that can be distinguished by a unique set of observable symptoms. In other words, patients were characterized by clear descriptive criteria that correspond to actionable clinical insights. This contrasts with previous studies, which have typically produced subtypes characterized by severity; that is, the subtypes tended to center the prevalence of symptoms in general as opposed to observable syndromes. To confirm that our results were not just a function of our data, we tested a commonly-used method as a point of comparison and found that it also produced subtypes stratified by severity. Several broad classes of future work might help refine depressive subtypes such as exploring broader measures, like functional assessments, or extensions of LDA, such as applications to raw text data. By identifying more homogeneous groups of patients with depression, these findings could support the creation of clinical decision support tools or downstream depression research for biomarker development.